AI is transforming content compliance by automating checks for legal, regulatory, and brand standards across platforms. Here’s what you need to know:

- Why It Matters: Non-compliant content can lead to penalties, like the $48.6M FTC settlement in 2026. AI makes faster, real-time compliance checks possible.

- Key Tools:

- NLP (Natural Language Processing): Flags risky words and ensures required terms are included.

- Computer Vision: Scans images/videos for issues like unlicensed logos or missing disclaimers.

- Hybrid Systems: Combine AI and rule-based checks for improved accuracy.

- Major Regulations: Stay current on rules such as the FTC’s guidelines and CAN-SPAM, plus new laws like NY’s Synthetic Performer Law (effective June 2026).

- Challenges: Risks include AI bias, hallucinations, and unauthorized tools. Regular audits and human oversight are critical.

AI can significantly reduce review times and errors, but human expertise remains essential for nuanced decisions. Combining both creates a reliable compliance system.


How AI Algorithms Handle Content Compliance

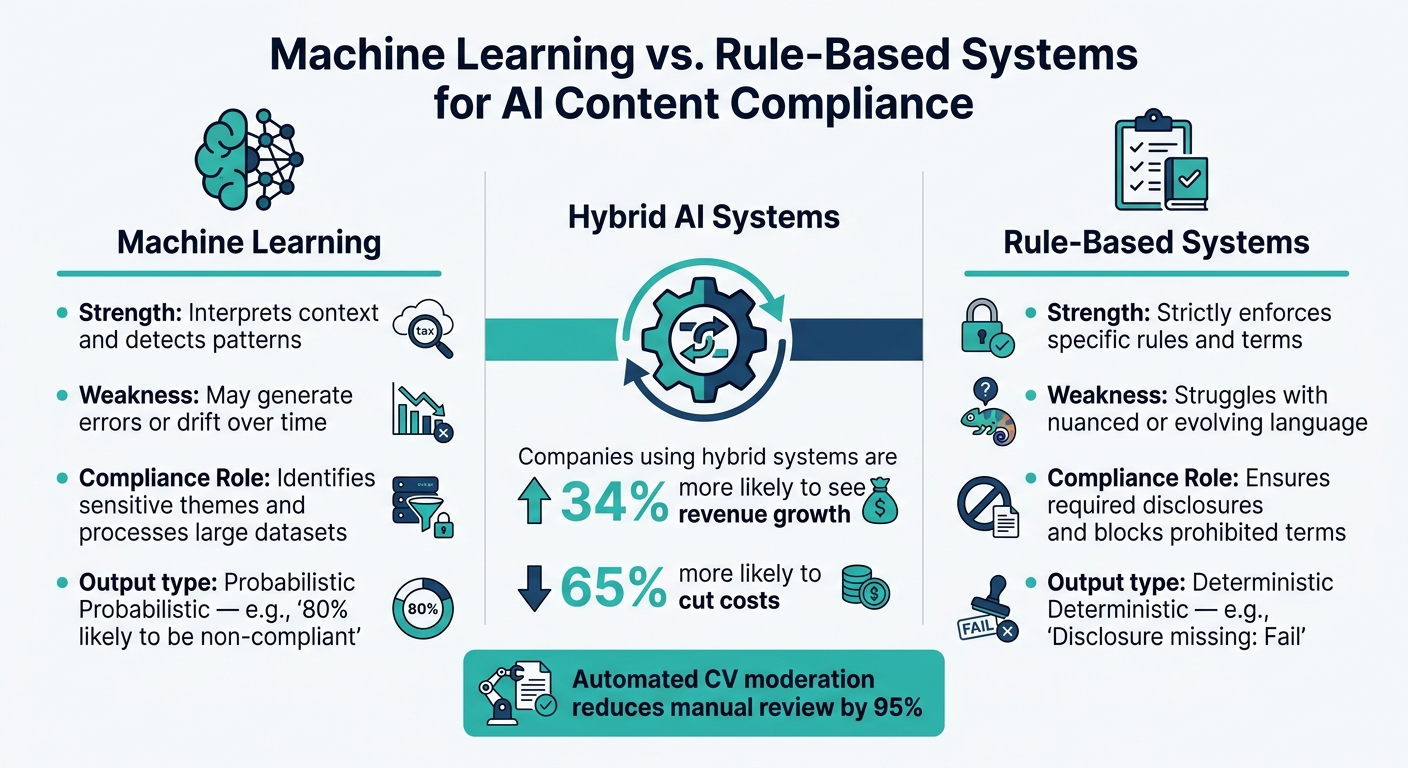

Machine Learning vs. Rule-Based Systems for AI Content Compliance

Natural Language Processing (NLP) for Text Analysis

NLP plays a central role in text compliance. It analyzes text much as a human reviewer would, but at a scale no human team can match. A key technique is checking text against "deny lists" of high-risk or prohibited words. In the pharmaceutical industry, for instance, an NLP system might flag the word "cure" and suggest more compliant alternatives such as "treats" or "alleviates symptoms." The same approach extends to other regulated language, like financial disclaimers or health claims.
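A minimal sketch of a deny-list scan with suggested replacements is shown here. The `DENY_LIST` entries and the `scan_deny_list` function are hypothetical and for illustration only; real systems typically layer NLP models on top of this kind of lookup.

```python
import re

# Hypothetical deny list: prohibited term -> suggested compliant alternatives.
# "cure" echoes the pharmaceutical example; a real list comes from legal/regulatory.
DENY_LIST = {
    "cure": ["treats", "alleviates symptoms"],
    "guaranteed returns": ["potential returns"],
}

def scan_deny_list(text: str) -> list[dict]:
    """Return one finding per deny-list hit, with its position and suggestions."""
    findings = []
    for term, alternatives in DENY_LIST.items():
        # Whole-word, case-insensitive match, so "cure" does not fire on "secure".
        pattern = re.compile(rf"\b{re.escape(term)}\b", re.IGNORECASE)
        for match in pattern.finditer(text):
            findings.append({
                "term": term,
                "start": match.start(),
                "matched": match.group(0),
                "suggestions": alternatives,
            })
    # Report findings in the order they appear in the text.
    return sorted(findings, key=lambda f: f["start"])

findings = scan_deny_list("Our cream is a cure for dry skin and keeps pores secure.")
```

Plain word-boundary matching misses inflections such as "cures." This is one reason deny lists need ongoing maintenance.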

NLP doesn’t stop at identifying problematic language. It also checks that required terms or phrases are present. For example, if a crypto investment ad is missing a mandatory risk warning, the system can add it automatically before the ad goes live. This builds compliance checks directly into the content creation process, an approach often called "compliance as code."
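The required-disclosure check can be sketched as follows. `REQUIRED_DISCLOSURES`, the `crypto_ad` category, and the warning text are hypothetical placeholders, not legally reviewed wording.

```python
# Hypothetical policy: content category -> mandatory disclosure text.
# The wording is illustrative only, not legally reviewed language.
REQUIRED_DISCLOSURES = {
    "crypto_ad": "Crypto assets are volatile. You could lose some or all of your investment.",
}

def enforce_disclosure(text: str, category: str) -> tuple[str, bool]:
    """Append the category's mandatory disclosure if it is missing.

    Returns the (possibly updated) text and whether a disclosure was added.
    """
    disclosure = REQUIRED_DISCLOSURES.get(category)
    if disclosure is None:
        return text, False  # no disclosure required for this category
    # Case-insensitive containment check; production systems likely need
    # fuzzier matching to accept approved variants of the wording.
    if disclosure.lower() in text.lower():
        return text, False
    return f"{text.rstrip()}\n\n{disclosure}", True

ad, added = enforce_disclosure("Trade TOKEN with zero fees!", "crypto_ad")
```

The function is idempotent. Running it again on already-compliant text leaves the text unchanged, so it can safely sit in an automated publishing pipeline.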

"AI models don’t go to law school. They don’t ‘know’ regulations. They simply predict the next word in a sentence." – Charlotte Baxter-Read, Lead Marketing Manager, Markup AI [3]

That said, rule-driven NLP checks such as deny lists are only as good as the rules behind them. If those rules are unclear or outdated, the system may miss violations or flag compliant content incorrectly.

Computer Vision for Image and Video Compliance

Images and videos bring their own set of compliance challenges. Issues like explicit content, unlicensed logos, hate symbols, or embedded text that violates disclosure rules need specialized tools. This is where computer vision (CV) steps in, scanning visual content as soon as it’s uploaded.

For videos, CV often uses frame sampling: it analyzes one frame every 15–30 seconds instead of every single frame. A single non-compliant frame can be enough to trigger a block. CV also uses Optical Character Recognition (OCR) to detect text embedded in images, which lets it verify disclaimers or other required language baked into graphics. According to industry data, automated CV moderation can reduce the need for manual review by 95% [4].
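The frame-sampling logic can be sketched like this, assuming a fixed sampling interval. `classify_frame` is a hypothetical stand-in for a real CV model (plus OCR). It takes a timestamp and returns `True` when that frame violates policy.

```python
from typing import Callable

def sample_timestamps(duration_s: float, interval_s: float = 15.0) -> list[float]:
    """Timestamps (in seconds) of frames to inspect: 0, interval, 2*interval, ..."""
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    return [i * interval_s for i in range(int(duration_s // interval_s) + 1)]

def review_video(duration_s: float,
                 classify_frame: Callable[[float], bool],
                 interval_s: float = 15.0) -> dict:
    """Block the video if any sampled frame is classified as non-compliant.

    classify_frame is hypothetical: a placeholder for a CV model call.
    """
    flagged = [t for t in sample_timestamps(duration_s, interval_s)
               if classify_frame(t)]
    return {"blocked": bool(flagged), "flagged_at": flagged}
```

For example, a 60-second clip is checked at 0, 15, 30, 45, and 60 seconds. A violation spanning 40–50 s is caught at the 45 s sample. A brief violation between 20 and 25 s falls between samples and is missed. That is the trade-off sampling makes for speed.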

Hybrid systems that combine CV and NLP are especially useful for spotting "context collisions" – situations where an image paired with specific text creates a compliance issue.
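One simple way to express context collisions is a table of (image label, text term) pairs that are acceptable on their own but non-compliant together. The sketch below assumes the image labels come from a CV model and the text comes from the accompanying copy. `COLLISION_RULES` and its entries are hypothetical examples.

```python
import re

# Hypothetical collision rules: (image label, text term) -> reason.
# Labels and terms are illustrative; real ones would come from a policy pack.
COLLISION_RULES = {
    ("alcohol", "kids"): "Alcohol imagery paired with youth-oriented copy",
    ("prescription_bottle", "cure"): "Medication imagery paired with a cure claim",
}

def find_context_collisions(image_labels: set[str], text: str) -> list[str]:
    """Return the reason for every collision rule triggered by this image/text pair."""
    # Naive tokenization: lowercase alphabetic runs, so punctuation is ignored.
    words = set(re.findall(r"[a-z]+", text.lower()))
    return [reason for (label, term), reason in COLLISION_RULES.items()
            if label in image_labels and term in words]
```

Neither the image nor the text triggers a rule alone. Only the combination does, and that is exactly what single-modality checks cannot see.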

Hybrid AI Models and Rule-Based Engines

No single model is flawless. NLP and CV excel at analyzing unstructured content, but they can generate false positives, miss subtle violations, or drift over time. Rule-based engines act as a safeguard, providing deterministic checks that enforce specific requirements without exception.

Here’s how the combination works: AI models handle tasks like identifying patterns and assigning confidence scores, while rule-based systems step in as a final checkpoint for critical, non-negotiable requirements. For example, if a disclosure is missing, the rule-based system flags it with a clear pass/fail result.
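The split between probabilistic and deterministic checks can be sketched as follows. Here `ml_score` is assumed to be a model's estimated probability of non-compliance. The 0.5 review threshold is a placeholder, not a recommendation.

```python
from dataclasses import dataclass

@dataclass
class Verdict:
    decision: str        # "fail", "review", or "pass"
    reasons: list[str]

def hybrid_check(ml_score: float, text: str, required_phrases: list[str],
                 review_threshold: float = 0.5) -> Verdict:
    """Combine a probabilistic ML score with deterministic rule checks.

    ml_score: hypothetical model output, the estimated probability (0-1)
    that the content is non-compliant.
    required_phrases: non-negotiable disclosures; a missing one is a hard fail.
    """
    missing = [p for p in required_phrases if p.lower() not in text.lower()]
    if missing:
        # Rule-based checkpoint: deterministic pass/fail, regardless of the model.
        return Verdict("fail", [f"Disclosure missing: {p}" for p in missing])
    if ml_score >= review_threshold:
        return Verdict("review", [f"Model estimates {ml_score:.0%} likelihood of non-compliance"])
    return Verdict("pass", [])
```

The rule check overrides the model whenever a required phrase is missing, so a low model score can never let that content through.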

| Feature | Machine Learning | Rule-Based Systems |
|---|---|---|
| Strength | Interprets context and detects patterns | Strictly enforces specific rules and terms |
| Weakness | May generate errors or drift over time | Struggles with nuanced or evolving language |
| Compliance Role | Identifies sensitive themes and processes large datasets | Ensures required disclosures and blocks prohibited terms |
| Output | Probabilistic (e.g., "80% likely to be non-compliant") | Deterministic (e.g., "Disclosure missing: Fail") |

Companies using hybrid systems for real-time monitoring report being 34% more likely to see revenue growth and 65% more likely to cut costs [5]. This makes a strong case for moving beyond traditional, manual compliance methods.

How to Set Up an AI-Powered Compliance System

Setting Compliance Parameters for Your Brand

Start by defining the specific compliance needs for your brand. This includes tone, vocabulary, mandatory disclaimers, and any industry-specific guidelines. These elements are often consolidated into a "policy pack" or a brand voice matrix [7][9].

Next, categorize your content based on its risk level:

- Low-risk content like social media captions or email subject lines can be primarily managed by AI and content marketing tools.

- Medium-risk content such as blog posts or ad copy should be reviewed by humans before publishing.

- High-risk content like legal statements, crisis communications, or sensitive announcements should remain entirely human-generated, without AI involvement [10].
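The three risk tiers can be encoded as a simple routing table. The content-type names in `RISK_BY_TYPE` are hypothetical and mirror the examples above. Defaulting unknown types to the high tier is a design assumption, not something the tiers themselves prescribe.

```python
from enum import Enum

class Risk(Enum):
    LOW = "low"        # AI may handle end to end
    MEDIUM = "medium"  # AI drafts, a human approves before publishing
    HIGH = "high"      # human-written only, no AI involvement

# Hypothetical mapping of content types to tiers, following the examples above.
RISK_BY_TYPE = {
    "social_caption": Risk.LOW,
    "email_subject": Risk.LOW,
    "blog_post": Risk.MEDIUM,
    "ad_copy": Risk.MEDIUM,
    "legal_statement": Risk.HIGH,
    "crisis_communication": Risk.HIGH,
}

def workflow_for(content_type: str) -> dict:
    """Return the handling rules for a content type."""
    # Unknown types default to HIGH, so nothing unclassified reaches AI.
    risk = RISK_BY_TYPE.get(content_type, Risk.HIGH)
    return {
        "risk": risk,
        "ai_allowed": risk is not Risk.HIGH,
        "human_approval_required": risk is not Risk.LOW,
    }
```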

"Guidelines act as your brand’s immune system for AI-generated content." – AI-Ready CMO Editorial Team [10]

Once you’ve established these categories, embed the rules into your AI system’s prompts and workflows. Specify banned phrases, mandatory disclosures, and reading-level requirements so that AI outputs align with your brand’s standards. Research shows that AI systems trained with clear brand parameters can boost audience engagement by up to 40% [9].
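Here is one possible way to turn a policy pack into prompt instructions. The `PolicyPack` fields and the wording of `build_system_prompt` are hypothetical. They are not a standard format or any vendor's API.

```python
from dataclasses import dataclass, field

@dataclass
class PolicyPack:
    """Hypothetical brand policy pack; field names are illustrative."""
    brand: str
    tone: str
    banned_phrases: list[str] = field(default_factory=list)
    mandatory_disclosures: list[str] = field(default_factory=list)
    max_reading_grade: int = 8

def build_system_prompt(pack: PolicyPack) -> str:
    """Render the policy pack as instructions to prepend to generation prompts."""
    lines = [
        f"You write on behalf of {pack.brand}. Tone: {pack.tone}.",
        f"Keep the text at or below a grade {pack.max_reading_grade} reading level.",
    ]
    if pack.banned_phrases:
        lines.append("Never use these phrases: " + "; ".join(pack.banned_phrases) + ".")
    for disclosure in pack.mandatory_disclosures:
        lines.append(f'Include this disclosure verbatim: "{disclosure}"')
    return "\n".join(lines)
```

Prompt instructions guide a model but do not guarantee compliance. Pairing them with a deterministic post-generation check, such as a deny-list scan, keeps the rules enforceable.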

With these parameters in place, the next step is integrating human oversight to ensure compliance stays on track.

Building a Human-AI Review Workflow

A reliable compliance system combines AI efficiency with human judgment. AI can handle large volumes of content, but humans are essential for nuanced decisions.

AI tools can assign each piece of content a confidence score reflecting how likely it is to contain a violation. Here’s how this triage typically works:

- High-confidence violations are automatically flagged or corrected by the system.

- Medium-confidence issues are sent to human reviewers for further evaluation.

- Items with low violation confidence pass through with minimal intervention [1].
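The triage can be sketched as a pair of cut-offs on the model's violation confidence. The 0.9 and 0.5 values are placeholder assumptions to be tuned per content type.

```python
def triage(violation_confidence: float,
           auto_threshold: float = 0.9,
           review_threshold: float = 0.5) -> str:
    """Route content by the model's confidence that it violates a rule.

    The 0.9 / 0.5 cut-offs are placeholders, not recommendations.
    """
    if not 0.0 <= violation_confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if violation_confidence >= auto_threshold:
        return "auto_flag"      # high confidence: block or auto-correct
    if violation_confidence >= review_threshold:
        return "human_review"   # medium confidence: send to a reviewer
    return "pass"               # low confidence: publish with minimal intervention
```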

This triage system keeps the workflow efficient while minimizing risks. For content flagged for human review, assigning tasks to the right team members is key. Tone reviews, legal checks, and factual accuracy should be handled by brand reviewers, legal experts, and subject matter specialists, respectively [11]. Providing reviewers with a simple checklist – such as "Does this contain a medical claim? Y/N" – helps maintain consistency across the team.
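The checklist-to-reviewer routing can be sketched as follows. The role names, questions, and mappings are hypothetical examples based on the split described above.

```python
# Hypothetical routing table: type of check -> reviewer role.
REVIEWER_FOR = {
    "tone": "brand_reviewer",
    "legal": "legal_expert",
    "factual_accuracy": "subject_matter_expert",
}

# Hypothetical Y/N checklist; a "yes" means the item needs that type of check.
CHECKLIST = {
    "Does this contain a medical claim?": "legal",
    "Does this cite statistics or research?": "factual_accuracy",
    "Does this depart from the approved brand voice?": "tone",
}

def assign_reviewers(answers: dict[str, bool]) -> dict[str, list[str]]:
    """Turn checklist answers into review tasks grouped by reviewer role."""
    tasks: dict[str, list[str]] = {}
    for question, needs_check in answers.items():
        if needs_check:
            role = REVIEWER_FOR[CHECKLIST[question]]
            tasks.setdefault(role, []).append(question)
    return tasks
```

Because every reviewer answers the same fixed questions, the same content produces the same assignments, which supports the consistency the checklist is meant to provide.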

"The goal is not just to catch bad outputs. The goal is to create a governance system that proves why an output was approved, who approved it, and which checks it passed." – Ethan Cole, Senior SEO Content Strategist [11]

Establish clear Service Level Agreements (SLAs) to ensure human reviewers respond promptly. With AI streamlining the process, content review cycles can be sped up by 30–50% [6]. Together, these AI and human review layers create a comprehensive compliance system.
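A simple SLA check only needs the time an item entered the review queue and a target per priority level. The `standard` and `urgent` priorities and their 24-hour and 4-hour targets are hypothetical. Set them to whatever your team agrees.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical SLA targets per review priority.
SLA_BY_PRIORITY = {
    "standard": timedelta(hours=24),
    "urgent": timedelta(hours=4),
}

def sla_status(queued_at: datetime, priority: str,
               now: Optional[datetime] = None) -> dict:
    """Report the review deadline and whether it has already been missed."""
    if now is None:
        now = datetime.now(timezone.utc)
    deadline = queued_at + SLA_BY_PRIORITY[priority]
    return {"deadline": deadline, "overdue": now > deadline}
```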

Tracking and Improving Compliance Performance

Setting up the system is just the beginning. Regular monitoring and updates are essential to keep everything running smoothly. Plan audits every 4–12 weeks to identify and address any performance issues before they escalate [1].

Override data – categorized by failure type (e.g., false positives, missed violations, or tone mismatches) – can be used to retrain the AI model [6][1]. Automated quality assurance processes can cut down manual revisions by as much as 70%, but this only works if the system is actively maintained [8].
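Here is a sketch of turning override data into a failure breakdown and corrected training labels. It assumes each logged override is a case where a reviewer reversed the AI's flag/pass decision. The `OVERRIDES` records are made-up examples, not real data.

```python
from collections import Counter

# Hypothetical override log: each entry is a case where a reviewer reversed
# the AI's decision, labeled with one of the failure types named above.
OVERRIDES = [
    {"text": "Helps you feel refreshed", "ai_flagged": True, "failure_type": "false_positive"},
    {"text": "Cures headaches fast", "ai_flagged": False, "failure_type": "missed_violation"},
    {"text": "Yo, grab this deal", "ai_flagged": False, "failure_type": "tone_mismatch"},
    {"text": "Doctor recommended", "ai_flagged": True, "failure_type": "false_positive"},
]

def failure_breakdown(overrides: list[dict]) -> Counter:
    """Count overrides per failure type to show where the model is weakest."""
    return Counter(o["failure_type"] for o in overrides)

def retraining_examples(overrides: list[dict]) -> list[tuple[str, bool]]:
    """Turn overrides into corrected (text, should_flag) labels.

    Each override reverses the AI's decision, so the corrected label is its negation.
    """
    return [(o["text"], not o["ai_flagged"]) for o in overrides]
```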

Maintain an immutable audit log that records every AI decision, human reviewer action, and override reason. This record is crucial for regulatory defense if your content faces scrutiny from legal or regulatory bodies [6][1]. By consistently tracking and refining your system, you’ll ensure it evolves alongside regulatory changes and your brand’s needs.
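One common way to make a log tamper-evident is hash chaining: each entry stores the hash of the previous entry. The in-memory `AuditLog` class below is a hypothetical sketch of that technique. True immutability would also require write-once storage, which this does not provide.

```python
import hashlib
import json
from datetime import datetime, timezone

class AuditLog:
    """Append-only, hash-chained log (hypothetical sketch). Editing or deleting
    an earlier entry breaks verification of everything after it."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, action: str, content_id: str, reason: str = "") -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,            # e.g. "ai_model" or a reviewer ID
            "action": action,          # e.g. "flagged", "approved", "override"
            "content_id": content_id,
            "reason": reason,
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        body["hash"] = self._digest(body)
        self.entries.append(body)
        return body

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash a canonical JSON encoding of every field except the hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Recompute every hash and link; False means the log was altered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.append("ai_model", "flagged", "post-123", "Deny-list term: cure")
log.append("reviewer-42", "override", "post-123", "False positive: quoted customer review")

# Simulate someone editing an earlier record after the fact.
tampered = AuditLog()
tampered.entries = [dict(e) for e in log.entries]
tampered.entries[0]["reason"] = "edited after the fact"
```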

Benefits and Challenges of AI in Content Compliance

Advantages of Using AI for Compliance

AI compliance tools bring speed and scale. They can monitor millions of assets across channels and languages, catching non-compliant content in under 20 milliseconds, before it reaches a user. This real-time detection reduces reliance on post-publication audits [2][14][15]. Companies using this runtime enforcement have reported a 50% drop in manual governance workload [14], freeing human reviewers to focus on complex, judgment-heavy decisions.

Another key strength of AI is its consistency. Human reviewers, no matter how skilled, can vary in their application of rules over time or in different scenarios. AI, on the other hand, applies the same standards uniformly across all regions and business units [13][15]. This consistency is especially important in highly regulated industries where even small errors – like a missing disclosure or incorrect terminology – can lead to serious regulatory repercussions [2].

"AI compliance copilots enforce governance at runtime – not after the fact – intercepting interactions, evaluating policies, and blocking violations in under 20ms." – Trussed AI [14]

Despite these advantages, there are challenges that organizations must navigate carefully.

Challenges and Risks to Keep in Mind

One of the biggest hurdles is algorithmic bias. If the datasets used to train AI models are skewed or incomplete, the AI may flag content unfairly or fail to catch violations that fall outside its training scope [16]. As John Kim from Control Risks points out:

"Today’s well-tuned model could be tomorrow’s added risk vector if underlying datasets or business processes change." [16]

Another issue is hallucinations – a quirk of large language models. These systems don’t truly "understand" regulations; they predict text based on patterns, which can lead to incorrect or misleading outputs. This is particularly risky in sectors like healthcare or finance, where precision is non-negotiable.

Shadow AI amplifies these risks: 69% of cybersecurity leaders suspect that employees use unauthorized public AI tools at work [14]. These off-the-radar tools can create blind spots, potentially leading to data leaks or unmonitored compliance breaches.

The financial implications of these risks are massive. In 2024, the global average cost of a data breach reached $4.88 million, with financial firms facing an even higher average of $6.08 million [14].

While AI shines in handling large volumes and maintaining consistency, it’s not a standalone solution. High-stakes decisions still require clear rules and human oversight. Organizations that conduct regular AI audits are more than three times as likely to see successful outcomes from their AI programs compared to those that don’t [14].

Conclusion

Key Takeaways on AI for Content Compliance

AI has transformed content compliance, cutting review times from days to milliseconds through automated, real-time enforcement. The shift from reactive audits to proactive monitoring is a strategic change, not just a technical one.

Companies using runtime AI enforcement have reported a 50% drop in manual governance workload [14]. Nearly 50% of organizations predict AI-driven compliance will become the norm by 2026 [12]. At the same time, the stakes are higher than ever, with the global average cost of a data breach reaching $4.88 million in 2024 [14]. While the advantages are clear, challenges persist.

That said, AI isn’t a magic bullet. As Charlotte Baxter-Read of Markup AI points out, AI models don’t ‘know’ regulations; they predict the next word in a sentence [3].

To address this, combining AI with human expertise is key. AI excels at high-volume tasks like flagging restricted terms or adding required disclaimers, but it lacks the nuance needed for complex decisions. A hybrid model ensures both efficiency and accuracy – AI boosts your capacity, while human judgment provides the critical oversight needed to navigate regulatory intricacies.

FAQs

What content should never be approved by AI alone?

Content such as synthetic media, deepfakes, or AI-generated influencer material shouldn’t rely solely on AI for approval. It’s crucial to include proper disclosures and adhere to regulations, like the labeling requirements outlined in the EU AI Act and similar frameworks. This approach helps ensure both ethical practices and legal compliance.

How do I set confidence thresholds for AI compliance reviews?

Confidence thresholds determine how certain an AI system must be before approving or flagging content. Here’s how to approach this:

- Define acceptable confidence levels: Tailor these levels based on the sensitivity of the content and any applicable regulations. For instance, highly sensitive content may require stricter thresholds.

- Incorporate thresholds into moderation workflows: Set up processes where flagged content can be sent for manual review if it falls below the defined threshold.

- Monitor and refine thresholds regularly: Continuously evaluate performance metrics and adjust thresholds to keep up with changing compliance standards and system accuracy.
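One way to refine a threshold from review data is to choose the highest score cut-off that still catches a target share (recall) of human-confirmed violations. Here the score is treated as the model's likelihood of a violation, and content at or above the threshold is flagged or reviewed. `pick_threshold` and `review_share` are hypothetical helpers for this sketch.

```python
import math

def pick_threshold(history: list[tuple[float, bool]], target_recall: float) -> float:
    """Highest threshold that still catches `target_recall` of past violations.

    history: (model score, human-confirmed violation?) pairs from past reviews.
    """
    if not 0 < target_recall <= 1:
        raise ValueError("target_recall must be in (0, 1]")
    violation_scores = sorted((s for s, is_violation in history if is_violation),
                              reverse=True)
    if not violation_scores:
        raise ValueError("history contains no confirmed violations")
    # Catching at least this many violations meets the recall target.
    needed = math.ceil(target_recall * len(violation_scores))
    return violation_scores[needed - 1]

def review_share(history: list[tuple[float, bool]], threshold: float) -> float:
    """Fraction of all items that would be flagged or reviewed at this threshold."""
    return sum(1 for s, _ in history if s >= threshold) / len(history)
```

Raising the recall target for sensitive content lowers the threshold and increases the review load. Tracking `review_share` alongside recall makes that trade-off explicit each time you revisit the thresholds.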

This approach ensures the AI operates effectively while maintaining alignment with regulations and performance goals.

What should I log to prove compliance during an audit?

To demonstrate compliance during an audit, it’s essential to keep thorough records of your AI-related activities. Start by logging detailed information about AI training data, including its origin and how it was created. Similarly, document the processes behind content creation and any edits made to AI-generated material, ensuring you include the editorial history.

Additionally, keep records of internal policies that address ethical data use, transparency, and human oversight. These should be paired with documentation of compliance checks and evaluations for potential bias. It’s also vital to maintain logs for content labeling and certifications, especially as regulations evolve – such as requirements for labeling AI-generated content. This proactive approach helps ensure you’re prepared to meet current and future standards.